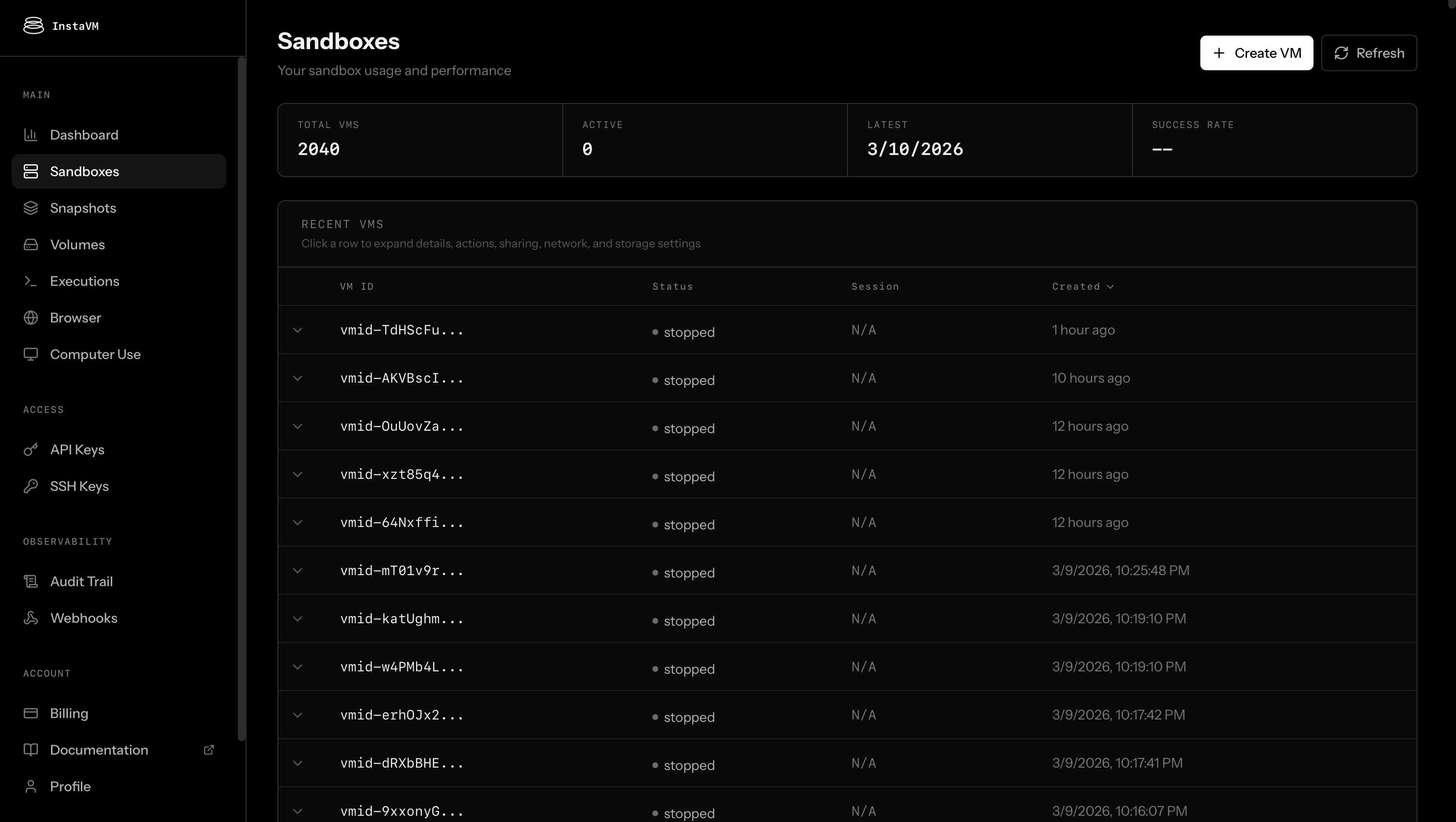

Today we're launching the public beta of InstaVM. Every new account gets $50 in free compute to deploy your agents, no credit card required.

Point your agent at ssh instavm.dev, or, if you're a human, sign up at instavm.io and grab an API key to get started.

Either way, you get a full Linux VM, with sudo, in under 200ms. Your own kernel, filesystem, and network stack, with hardware-level isolation. Use it, tear it down, spin up another. That's the basic building block. Everything else we built sits on top of it.

Prototyping agents is easy. Deploying them to production with security and controls is still hard.

You can vibe-code a working prototype in an afternoon. You can wire up a complex agent that browses the web, writes code, calls APIs, and makes decisions. The hard part is making it run somewhere real, with the isolation, permissions, observability, and controls that production demands. That's a lot of infrastructure grunt work that has nothing to do with the agent itself. So we built InstaVM to handle it. Built for agents and humans from day one.

Here's what you get:

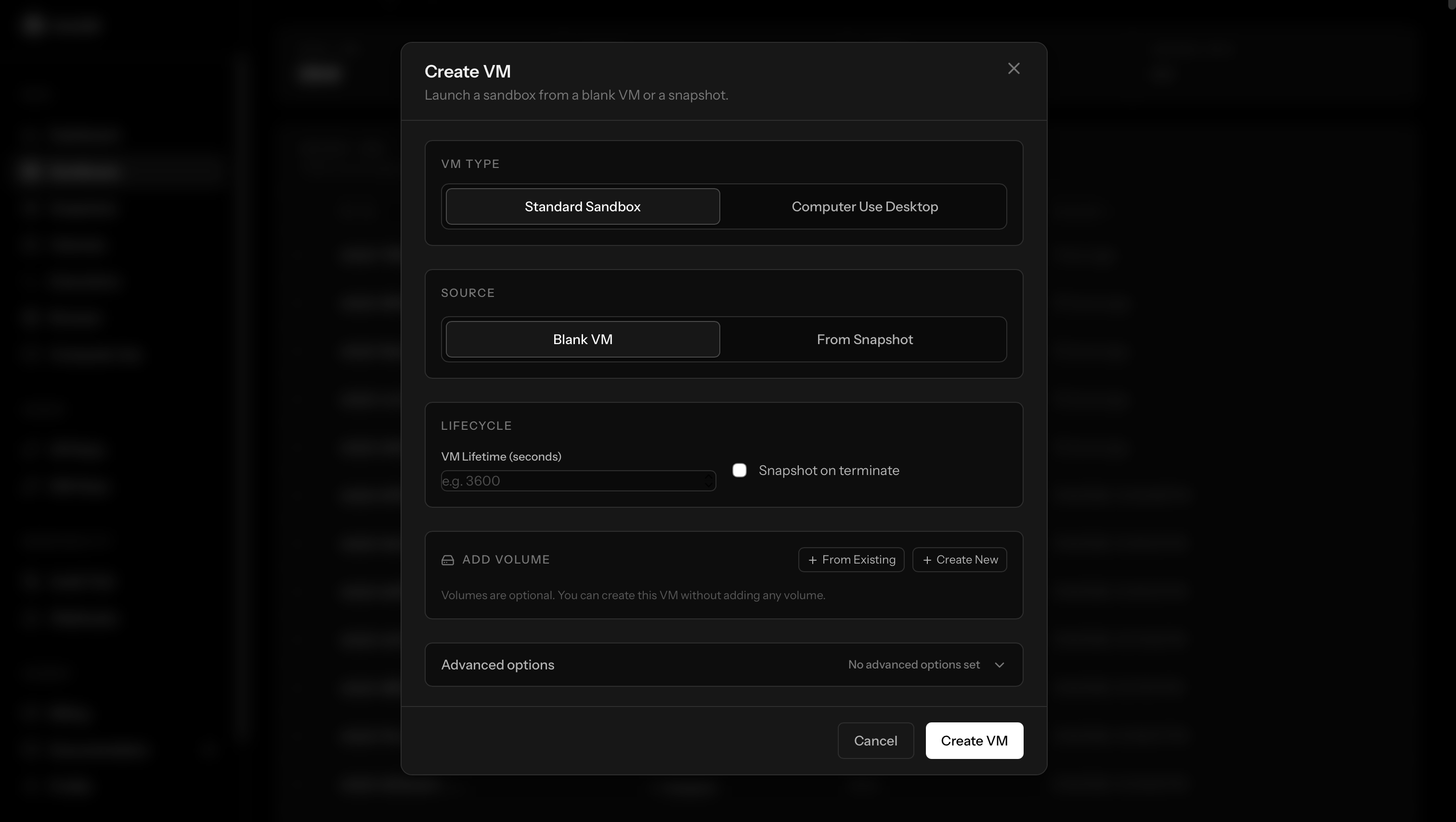

Full VMs, fast. Each agent gets its own isolated Linux environment in a time comparable to an average HTTP request. Configure vCPU and RAM to fit your workload. Install whatever you want. It's your machine.

Snapshots and clones. Bring your own OCI image or set up an environment from scratch (clone repos, install packages, configure tools), then snapshot it. Every new VM boots from that snapshot in under 500ms. Want to run 200 eval prompts in parallel? Clone a snapshot 200 times. Want every user request to get its own isolated agent? Spin one up per request. The setup cost is paid once, and each clone is a fresh, isolated environment.
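The "clone a snapshot 200 times" pattern is an ordinary parallel fan-out. Here is a minimal sketch in Python, assuming a hypothetical `clone_and_run` helper that stands in for whatever SDK or CLI call clones a snapshot, runs one prompt, and tears the clone down. The stub below does not call InstaVM at all; it only fabricates a VM id so the pattern can run locally.

```python
from concurrent.futures import ThreadPoolExecutor
from dataclasses import dataclass


@dataclass
class EvalResult:
    prompt: str
    vm_id: str
    output: str


def clone_and_run(snapshot_id: str, index: int, prompt: str) -> EvalResult:
    """Hypothetical stand-in for: clone the snapshot, run one prompt, tear down.

    A real version would call the InstaVM SDK or CLI here. This stub only
    builds a fake VM id so the fan-out logic can be exercised locally.
    """
    vm_id = f"{snapshot_id}-clone-{index}"
    return EvalResult(prompt=prompt, vm_id=vm_id, output=prompt.upper())


def run_evals(snapshot_id: str, prompts: list[str], max_workers: int = 32) -> list[EvalResult]:
    # One clone per prompt: no state can leak from one eval into another.
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        futures = [
            pool.submit(clone_and_run, snapshot_id, i, p)
            for i, p in enumerate(prompts)
        ]
        # Collect in submission order so results line up with the prompts.
        return [f.result() for f in futures]


if __name__ == "__main__":
    prompts = [f"prompt {i}" for i in range(200)]
    results = run_evals("snap-base", prompts)
    print(len(results), results[0].vm_id)  # prints: 200 snap-base-clone-0
```

Threads are a reasonable fit here because each task would spend its time waiting on network calls, not computing locally.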

Persistent volumes with checkpoints. Volumes give you storage that outlives any single VM. The interesting part: named checkpoints. Snapshot your volume state as "pre-experiment," run a destructive workflow, roll back if it goes wrong. Branch your data the way you branch code.
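The checkpoint-and-rollback semantics can be illustrated with a toy in-memory model. This is a hypothetical sketch of the behavior described above, not the InstaVM volume API: the class and method names are made up, and a real volume would typically use copy-on-write storage rather than full copies.

```python
class CheckpointedVolume:
    """Toy model of a volume with named checkpoints (hypothetical, not the InstaVM API)."""

    def __init__(self) -> None:
        self.files: dict[str, str] = {}
        self._checkpoints: dict[str, dict[str, str]] = {}

    def write(self, path: str, data: str) -> None:
        self.files[path] = data

    def delete(self, path: str) -> None:
        self.files.pop(path, None)

    def checkpoint(self, name: str) -> None:
        # Values are immutable strings, so a shallow dict copy is an
        # independent snapshot: later writes cannot alter it.
        self._checkpoints[name] = dict(self.files)

    def restore(self, name: str) -> None:
        if name not in self._checkpoints:
            raise KeyError(f"no checkpoint named {name!r}")
        # Copy again so the checkpoint itself survives further edits.
        self.files = dict(self._checkpoints[name])


if __name__ == "__main__":
    vol = CheckpointedVolume()
    vol.write("data.csv", "a,b\n1,2\n")
    vol.checkpoint("pre-experiment")
    vol.delete("data.csv")            # the destructive workflow
    vol.write("scratch.txt", "junk")
    vol.restore("pre-experiment")     # roll back
    print(sorted(vol.files))          # prints: ['data.csv']
```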

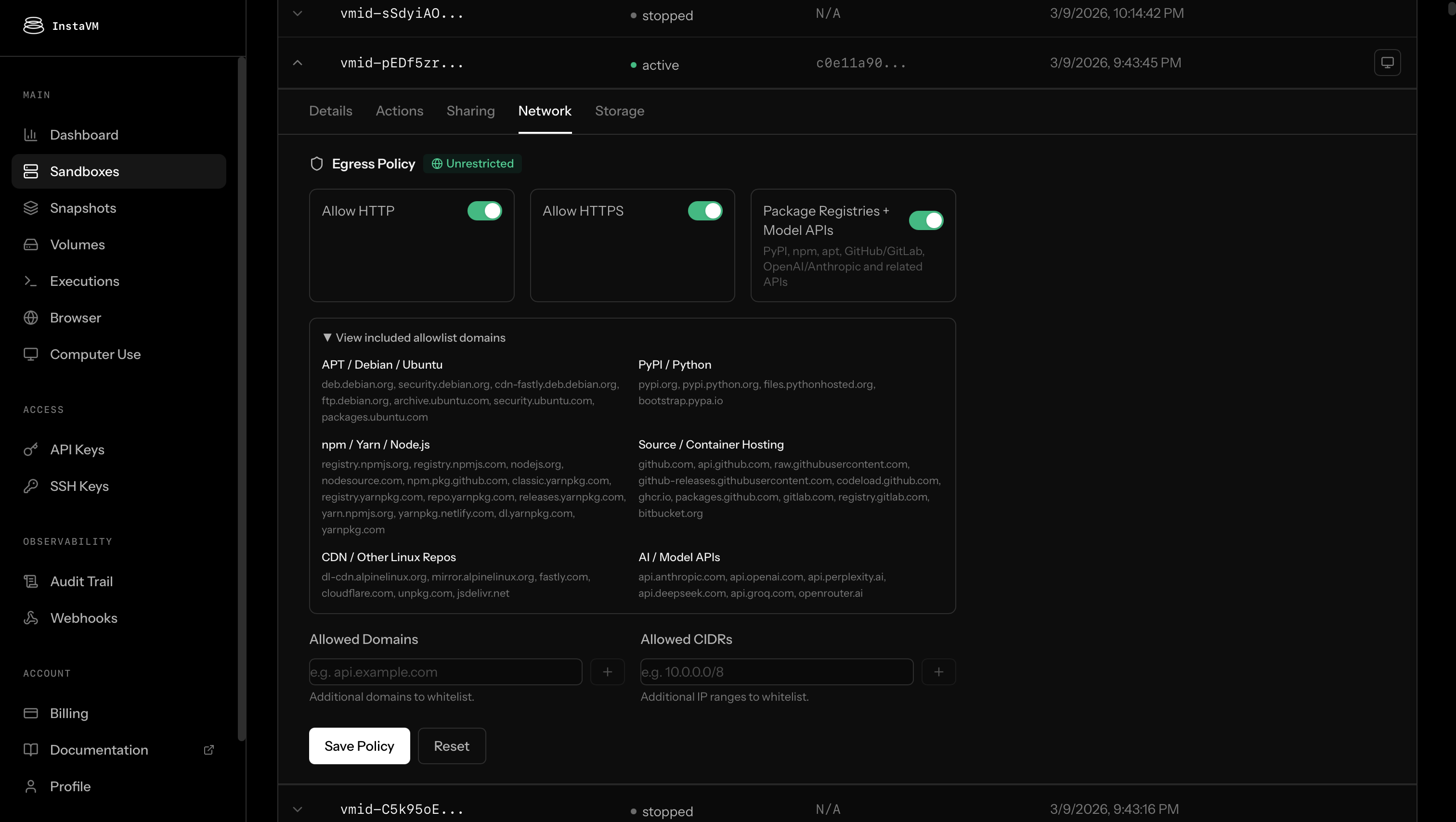

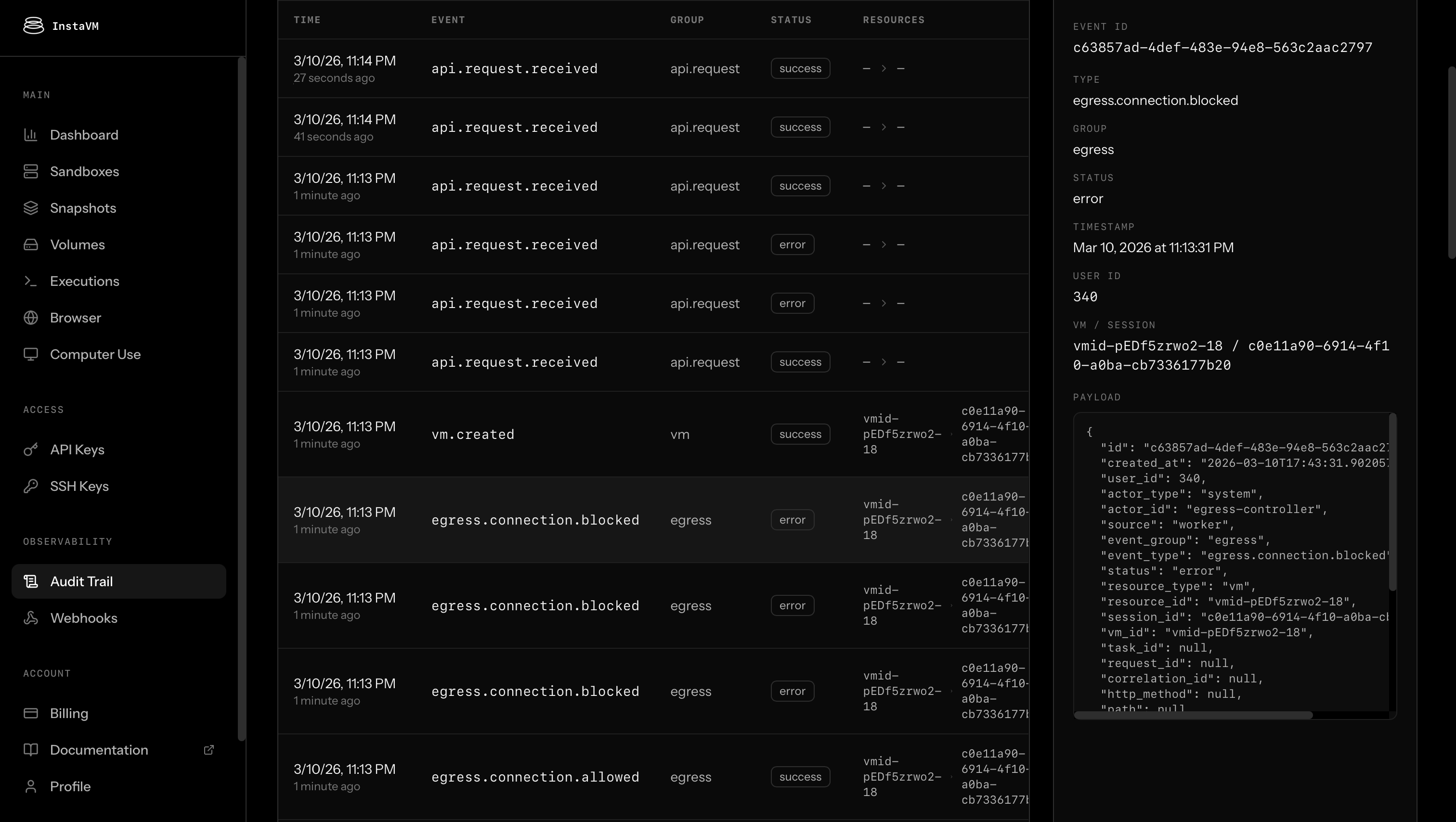

Egress controls. Three modes. Shut off all outbound traffic entirely. Allow pre-built groups (package managers, CDNs, AI API endpoints like OpenAI and Anthropic) with one toggle. Or whitelist specific domains and CIDRs for fine-grained control. Your agent talks to three APIs? Its runtime should reflect that, not have open access to the entire internet.
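To make the policy model concrete, here is a minimal default-deny egress check in Python. It is an illustrative sketch, not InstaVM's enforcement code: the group names and their contents in `GROUPS` are assumptions, and a production filter enforces policy at the network layer (including what a hostname actually resolves to), which this does not attempt. With no groups, domains, or CIDRs configured, every destination is denied, which corresponds to shutting off outbound traffic.

```python
import ipaddress

# Illustrative group contents only (an assumption, not InstaVM's real lists).
GROUPS: dict[str, set[str]] = {
    "package-managers": {"pypi.org", "files.pythonhosted.org", "registry.npmjs.org"},
    "ai-apis": {"api.openai.com", "api.anthropic.com"},
}


def _domain_matches(host: str, domain: str) -> bool:
    # Exact match or a true subdomain: "a.pypi.org" matches "pypi.org",
    # but "evilpypi.org" does not.
    return host == domain or host.endswith("." + domain)


def is_allowed(host: str, groups=(), domains=(), cidrs=()) -> bool:
    """Default-deny check: allow only listed groups/domains or IPs inside listed CIDRs."""
    host = host.lower().rstrip(".")
    try:
        addr = ipaddress.ip_address(host)
    except ValueError:
        addr = None  # not an IP literal, so treat it as a hostname
    if addr is not None:
        # IP literals are only allowed through CIDR rules.
        return any(addr in ipaddress.ip_network(c) for c in cidrs)
    allowed = set(domains)
    for g in groups:
        allowed |= GROUPS[g]
    return any(_domain_matches(host, d.lower().rstrip(".")) for d in allowed)
```

For example, `is_allowed("api.anthropic.com")` is `False` until the `ai-apis` group is enabled, and `is_allowed("10.0.3.7", cidrs=["10.0.0.0/16"])` is `True`.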

Proxy-based secret injection. Credentials are injected by the proxy at request time. They never enter the VM. Even if an agent's code is compromised, the secrets aren't there to steal. Most platforms pass secrets as env vars inside the runtime. We keep them outside. We're testing this now and it will be live soon.
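The core idea of proxy-side injection fits in a few lines. The sketch below is a hypothetical toy model, not InstaVM's implementation (which is still in testing): the `Request` type, `SecretInjectingProxy` class, and `api.example.com` host are all made up for illustration, and the network and TLS plumbing a real proxy needs is omitted. What it shows is the trust boundary: code in the VM builds requests with no credentials, and the secret exists only in the proxy object.

```python
from dataclasses import dataclass, field, replace


@dataclass
class Request:
    host: str
    path: str
    headers: dict[str, str] = field(default_factory=dict)


class SecretInjectingProxy:
    """Toy model of proxy-side credential injection (hypothetical).

    Secrets live only in this object, keyed by destination host. Requests
    from the VM arrive without credentials; the proxy adds them on the way out.
    """

    def __init__(self, secrets_by_host: dict[str, tuple[str, str]]) -> None:
        # host -> (header name, header value)
        self._secrets = {h.lower(): v for h, v in secrets_by_host.items()}

    def forward(self, req: Request) -> Request:
        secret = self._secrets.get(req.host.lower())
        if secret is None:
            return req  # no credential configured for this host: pass through
        name, value = secret
        # Drop any same-named header the agent set (HTTP header names are
        # case-insensitive), then add the real credential. Building a new
        # dict leaves the caller's request unmodified.
        headers = {k: v for k, v in req.headers.items() if k.lower() != name.lower()}
        headers[name] = value
        return replace(req, headers=headers)


if __name__ == "__main__":
    proxy = SecretInjectingProxy({"api.example.com": ("Authorization", "Bearer sk-test")})
    vm_request = Request(host="api.example.com", path="/v1/items")
    upstream = proxy.forward(vm_request)
    print(vm_request.headers)  # prints: {}
    print(upstream.headers)    # prints: {'Authorization': 'Bearer sk-test'}
```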

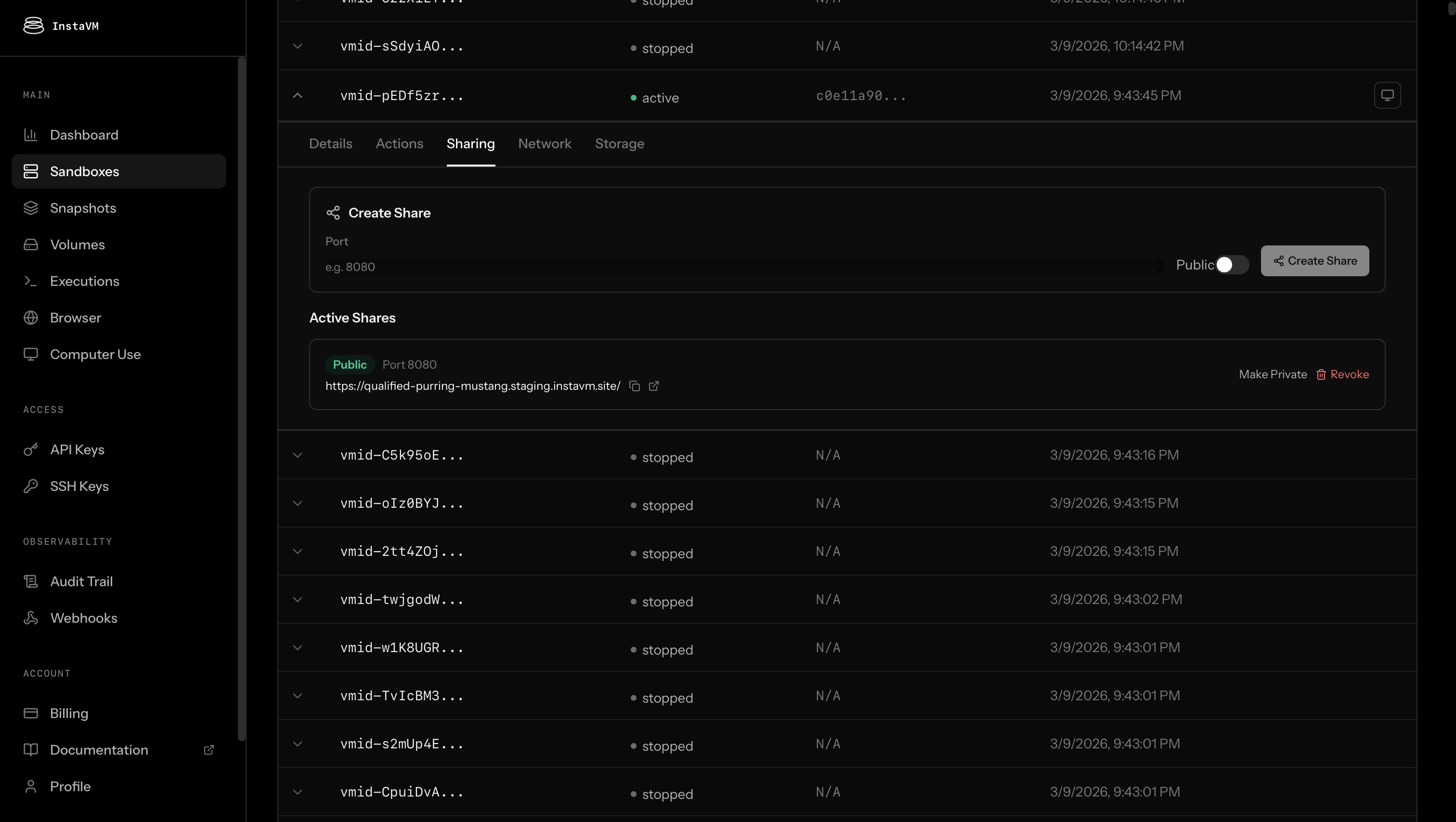

Shares. Expose any VM port as a URL with TLS. Public or private, your choice. Attach a custom domain when a preview needs a real address. Your agent builds something (a web app, an API, a dashboard) and it's live in one call.

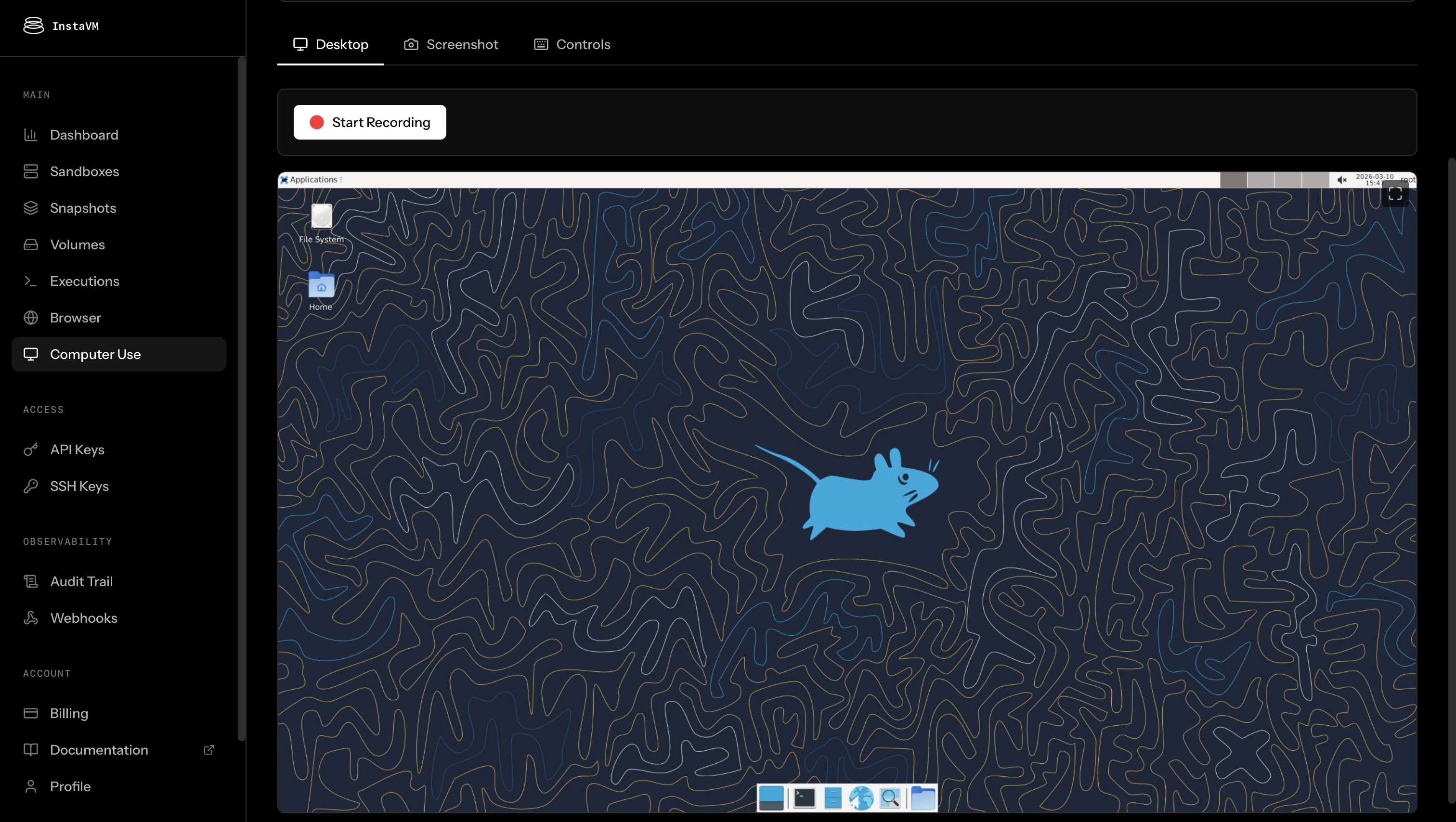

Browser and desktop automation. Full browser sessions: navigate, click, fill forms, take screenshots. Full Linux desktop for computer-use agents, with a VNC viewer so you can watch live or take over at any point.

Observability that's actually useful. Every action your agent takes is logged. Every code execution, every network request, every file operation, every policy decision. Granular, queryable, filterable. For computer-use VMs, full screen recordings capture exactly what the agent did, frame by frame. When something goes wrong, you don't guess. You watch the replay. When a client or compliance team asks what the agent actually did, you show them. This is the difference between running agents and operating them.

Webhooks. Push events to Slack, GitHub, Linear, or anywhere else. Signed payloads, retries, replay. Agent execution feeds into your existing ops workflow instead of being a black box.
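On the receiving end, a signed payload is usually checked by recomputing an HMAC over the raw request body and comparing it in constant time. A minimal sketch in Python, assuming hex-encoded HMAC-SHA256 over the raw body; the actual InstaVM signing scheme, header name, and payload shape are not specified here, so treat all three as assumptions.

```python
import hashlib
import hmac


def compute_signature(secret: bytes, body: bytes) -> str:
    # Assumed scheme: hex-encoded HMAC-SHA256 of the raw request body.
    return hmac.new(secret, body, hashlib.sha256).hexdigest()


def verify_webhook(secret: bytes, body: bytes, received_signature: str) -> bool:
    expected = compute_signature(secret, body)
    # compare_digest runs in constant time, so timing does not reveal
    # how many leading characters of a forged signature were correct.
    return hmac.compare_digest(expected, received_signature)


if __name__ == "__main__":
    secret = b"whsec_example"  # hypothetical shared secret
    body = b'{"event": "vm.created", "vm_id": "abc123"}'  # hypothetical payload
    sig = compute_signature(secret, body)
    print(verify_webhook(secret, body, sig))         # prints: True
    print(verify_webhook(secret, body + b" ", sig))  # prints: False
```

Verify against the raw bytes before parsing JSON: re-serializing a parsed payload can change whitespace or key order and break the signature.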

SSH as a first-class interface. Create, shell in, clone, share, all from your terminal:

ssh instavm.dev create --vcpu 4 --memory 8192

ssh abc123@instavm.dev

ssh instavm.dev clone abc123

ssh instavm.dev share create abc123 8080 --public

SDKs for Python and TypeScript. Bring any LLM or framework, local or remote: LangGraph, the Claude Agent SDK, DSPy, whatever you're building with. We provide the runtime, not the inference.

Local development with CodeRunner. Our open-source implementation (github.com/instavm/coderunner) runs on your Mac using Apple Containers. Same API as cloud InstaVM. Build locally, deploy to cloud, no code changes. Works with Claude Desktop, Gemini CLI, and any MCP-compatible client.

Deploy from your coding agent. Use the SDK directly, wire up MCP tools, or install the InstaVM skill for Claude Code and other agents that support it. Your agent writes the code, tests it, and deploys it to a live VM without leaving the conversation. No copy-pasting, no context switching.

The gap between "my agent works" and "my agent runs in production" is almost entirely infrastructure. Isolation, networking policy, credential management, logging, screen recording, deployable URLs. That's the grunt work we handle so you can focus on the agent.

Create an account at instavm.io, add your SSH key, and run:

ssh instavm.dev

Or create an API key and use our SDKs.